This week’s Times Higher has another article about Benjamin Ginsberg’s book The Fall of the Faculty: The Rise of the All-Administrative University and Why It Matters. It’s written about the US, but it has obvious implications for the UK too, where complaints from some academics about “bureaucrats” are far from uncommon, whether it’s that administrators are taking over, or that the tail is wagging the dog, or that we’re all too expensive/have too much power/are too numerous.

There are two ways, I think, in which I would like to respond to Ginsberg and his ilk. And it’s the “ilk” I’m more interested in, as I haven’t read his book and don’t intend to.

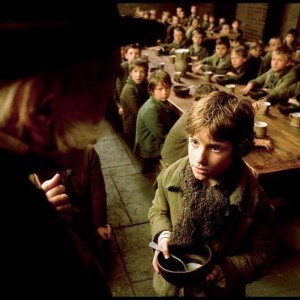

The first way I could respond is to write a critical blog post, probably with at least one reference to the classic ‘what have the Romans ever done for us?’ scene in Monty Python’s Life of Brian (“But apart from recruiting our students, hiring our researchers, fixing our computers, booking our conferences, balancing the books, and timetabling our classes, what have administrators ever done for us?”). It would probably involve a kind of riposte-by-parody – there are plenty of things I could say about academics based upon stereotypes and a lack of understanding of, insight into, or empathy for what their roles actually entail. Something about having summers off, being unable or unwilling to complete even the most basic administrative tasks, being totally devoid of any common sense, rarely if ever turning up at work… etcetera and so on. I might even be tempted to chuck in an anecdote or two, like the time when I had to explain to an absolutely furious Prof exactly why good governance meant that I wasn’t allowed to simply write a cheque – on demand – on the university’s behalf to anyone she chose to nominate.

The second way of responding is to consider whether Ginsberg and other critics might have a point.

On the whole, I don’t think they do, and I’ll say why later on. But clearly, reading the views attributed to Ginsberg, some of the comments that I’ve heard over the years, and the kind of comments that get posted below articles like Paul Greatrix’s defence of “back office” staff (also in the Times Higher), there’s an awful lot of anger and resentment out there – barely constrained fury in some cases. And rather than simply dismissing it, I think it’s worthwhile for non-academics to reflect on that anger, and to consider whether we’re guilty of any of the sins of which we’re accused.

I didn’t want to be a university administrator when I was growing up. It’s something I fell into almost by accident. I had decided against “progressing” my research from MPhil to PhD, because although I was confident that I could complete a PhD (I passed my MPhil without corrections), I was much less confident about the job market. Was I good enough to be an academic? Maybe. Did I want it enough? No. But it gave me a level of understanding and insight into – and a huge amount of respect for – those who did want it enough. Two more years (at least) living like a student? Being willing to up sticks and move to the other end of the country or the other side of the world for a ten month temporary contract? Thanks, but not for me. I was ready to move towards putting down roots. I was all set to go off and start teacher training when a job at Keele University came up that caught my eye. And that job was on what was then known as the “academic related” scale. And that’s how I saw myself, and still do. Academic related.

My point is, I didn’t sign up to be obstructive, to wield power over academics, to build an ‘empire’, or – worst of all – to be a jobsworth. I’ve never had a role where I’ve actually had formal authority over academics, but I have had roles where I’ve been responsible for setting up and running approval processes – for conference funding, for sabbatical leave, for the submission of research grant applications, and (at the moment) for ethical approval for research. When I had managerial responsibility for an academic unit, my aim was for academics to do academic tasks, and for managers and administrators to do managerial/administrative tasks. That’s how I used to explain my former role – in terms of what tasks that previously fell to academics would now fall to me. Nevertheless, academics were filling in forms and following administrative processes designed and implemented by me. While that’s not power, it is responsibility. I’m giving them things to do which are only instrumentally related to their primary goal of research. I am contributing to their administrative workload, and it’s down to me to make sure that anything I introduce is justified and proportionate, and that any systems I’m responsible for are as efficient as possible.

So when I hear complaints about ‘administration’ and ‘bureaucracy’ and university managers, whether those complaints are very specific or very general, I hope I’ll always respond by questioning and checking what I do, and by at least being open to the possibility that the critics have a point.

However, I don’t think most of these complaints are aimed at the likes of me. Partly because I’ve always had good feedback from academics (though what they say behind my back I have no idea….) but mainly because I’ve always been based in a School or Institute – I’ve never had a role in a central service department. Thus my work tends to be more visible and more understood. I have the opportunity to build relationships with academics because we interact on a variety of different issues on a semi-regular basis, which generally doesn’t happen for those based centrally.

And I think it’s those based centrally who usually get the worst flak in these kinds of debates. I’ve not been immune to the odd grumble about central service departments myself, when I’ve not got what I wanted from them when I wanted it. But if I’m honest, I have to accept that I don’t have a good understanding of what it is they do, what their priorities are, and what kinds of pressure they’re under. And I try to remind myself of that. I wonder how many people who posted critical comments on Paul’s article would actually be able to give a good account of what (say) the Registry actually does? I would imagine that relatively few of the academic critics have very much experience of management at any level in a large and complex organisation.

I’m not sure, however, that all of the critics bother to remind themselves of this. It’s similar to the kinds of complaints made about the civil service and the public sector in general. ‘Faceless bureaucrats’ is an interesting and revealing term – what it really means is that you, the critic, don’t know them and don’t know or understand what it is they do. ‘Non-job’ is another favourite of mine. There are many sectors that I don’t understand, and which have job titles and job descriptions which make no sense to me, but I’m not so lacking in imagination or so arrogant as to assume that that means that they’re “non-jobs”. In fact, I’d say the belief that there are large groups of administrators – whether in universities or elsewhere – who exist only to make work for themselves and to expand their ‘empire’, is a belief bordering on conspiracy theory. Especially in the absence of evidence. And extraordinary claims require extraordinary evidence. That’s not to say that there is no scope for efficiencies, of course, but that’s a different scale of response entirely.

By all means, let’s make sure that non-academic staff keep a relentless focus on the core mission of the university. Let’s question what we do, and consider how we could reduce the burden on academic staff, and be open to the possibility that the critics have a point.

But let’s not be too quick to denigrate what we don’t understand. And let’s not mistake ‘Yes, Prime Minister’ for a hard-hitting documentary….
